In Canada, environmental assessments (EAs) are completed on the promise that the decisions they generate will be grounded in findable and accessible evidence that anyone can scrutinize. Both federal and provincial governments have formally committed to open government and open science initiatives, and environmental assessment legislation emphasizes public access, transparency, and evidence-informed decision-making as core principles (Government of Canada, 2025; Office of the Chief Science Advisor of Canada, 2020). However, the seemingly straightforward promise of providing findable and accessible references to evidence is less often achieved in practice than might be expected.

My Master of Resource and Environmental Management capstone research project grew out of this evidence-provision problem and a real-world frustration. During an internship with a federal regional development agency, I spent many hours reviewing environmental assessments in an attempt to verify the cited evidence and sources. This task was often much more difficult than anticipated. Many references were incomplete, links were broken, and some sources were locked behind paywalls. Under tight policy development timelines, evidence that could not be quickly verified was passed over entirely. This problem compounds over time, as assessment decisions are revisited for monitoring, amendments, and accountability long after their approval. My experience clearly demonstrated that procedural openness is not the same as evidence transparency. Documents can be, and are, posted publicly with sources that are inconsistently referenced or impossible to verify. These shortcomings directly undermine the ability of practitioners, researchers, and the public to consider the evidence underlying environmental decision-making. My study aimed to examine systematically the gap between the promise of open information and actual practice.

What the Study Did

I used two complementary methods in this study: a policy and literature review to establish the standards that references to evidence should meet, followed by a systematic document audit to evaluate how those standards are reflected in practice. The policy review found that current environmental assessment requirements offer limited guidance regarding evidence transparency. Federally, proponents are required to provide key references, but no standard has been specified for how those references should be presented. Provincially, the bar is lower still, with most of the Atlantic Canada provinces not explicitly requiring a list of references as part of EA submissions, let alone stipulating a standard for the references. As a result, how evidence is recorded in environmental assessments varies considerably from project to project.

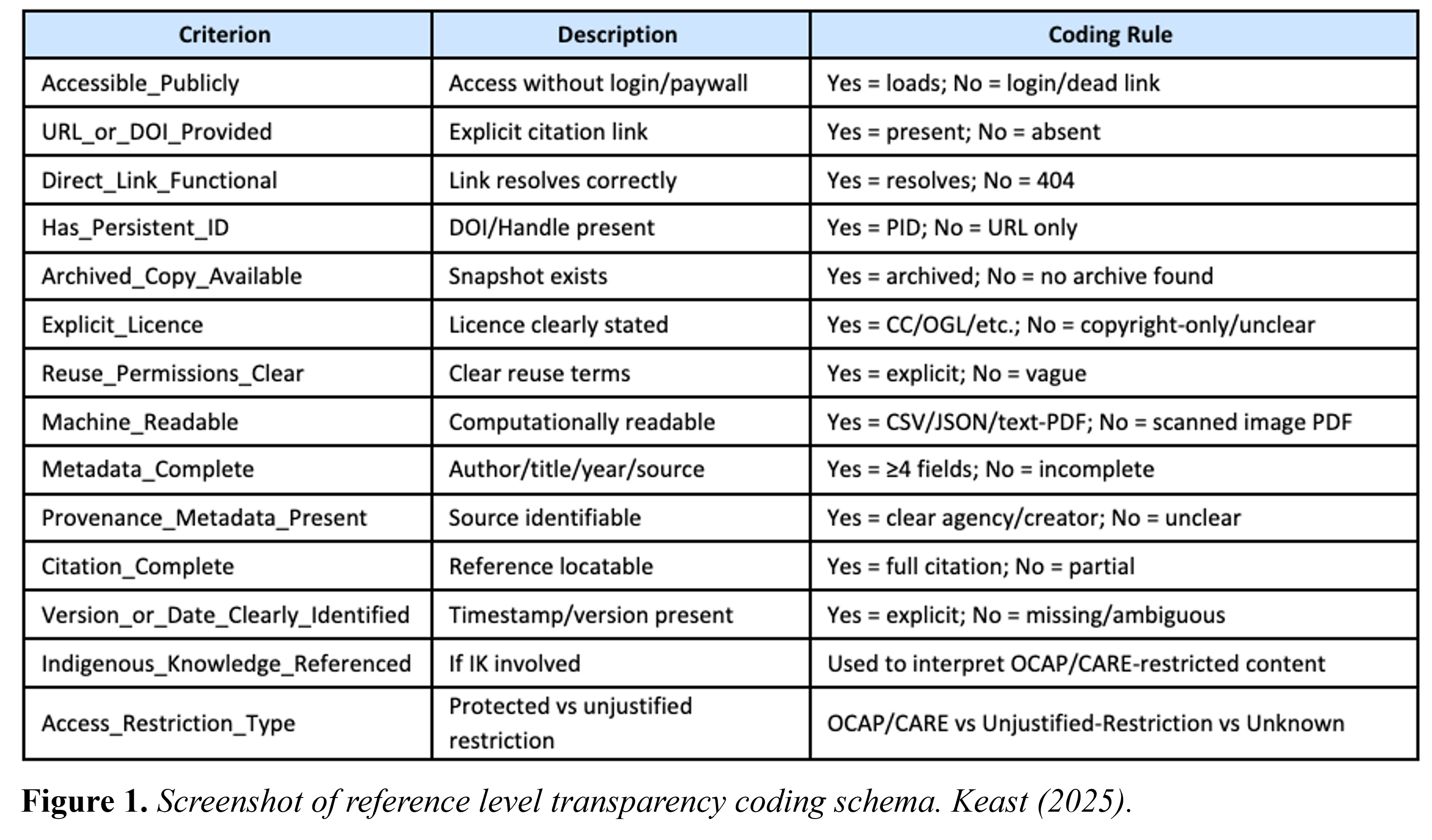

The document audit was based largely on frameworks identified in the literature review, mainly the FAIR principles – the idea that data and information should be Findable, Accessible, Interoperable, and Reusable (Wilkinson et al., 2016) – alongside the CARE (Collective Benefit, Authority to Control, Responsibility, and Ethics) and OCAP (Ownership, Control, Access, and Possession) principles (Carroll et al., 2020; First Nations Information Governance Centre [FNIGC], n.d.). I developed a structured coding framework to assess the transparency and completeness of individual references within six broad categories: accessibility, persistence, licensing and reuse clarity, machine-readability, provenance, and equity and permissions. The references were coded as “Yes,” “No,” or “Unknown” on fourteen indicators and assigned an Evidence Transparency Score from 0 to 10 (see Figure 1).
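For readers who want to see how a score of this kind could be computed, here is a minimal Python sketch. It is not the scoring rule used in the study: the indicator names are hypothetical, only six are shown for brevity, and the rule (share of "Yes" codes scaled to 10, with "Unknown" counted the same as "No") is an assumption made purely for illustration.

```python
from enum import Enum


class Code(Enum):
    YES = "Yes"
    NO = "No"
    UNKNOWN = "Unknown"


def transparency_score(codes: dict[str, Code]) -> float:
    """Scale the share of 'Yes' indicators to a 0-10 score.

    Assumed rule for illustration only: 'Unknown' earns no credit.
    """
    if not codes:
        raise ValueError("at least one indicator is required")
    yes_count = sum(1 for code in codes.values() if code is Code.YES)
    return round(10 * yes_count / len(codes), 2)


# Hypothetical indicator names, one per category in the framework.
example = {
    "has_working_link": Code.YES,
    "has_persistent_identifier": Code.YES,
    "licence_stated": Code.UNKNOWN,
    "machine_readable": Code.NO,
    "provenance_described": Code.NO,
    "permissions_documented": Code.UNKNOWN,
}

print(transparency_score(example))  # 2 of 6 indicators are "Yes"
```

Treating "Unknown" as earning no credit reflects the idea that a reader cannot rely on information that was never supplied; a different framework could reasonably weight it otherwise.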

It is important to note that not all information should be open by default. CARE and OCAP principles affirm that Indigenous communities should retain authority over how their knowledge is shared and used (Carroll et al., 2020; FNIGC, n.d.), meaning that restricted access to Indigenous Knowledge should not be considered a transparency failure, but an expression of data sovereignty. The coding framework was designed to accommodate both openness and sovereignty simultaneously.

Scoping the project was revealing. Initially, I planned to compare both federal and provincial assessments that had marine impacts in Atlantic Canada. However, I quickly faced an obstacle: federal environmental assessment files were unexpectedly difficult to locate and access consistently. Recently, other researchers have also encountered this complication and noted that federal assessment registries function primarily as document repositories rather than navigable evidence systems, which limits their usefulness for comparative analysis or public scrutiny (Westwood et al., 2025). This findability problem, discovered before I began the audit, became an early indicator of one of my study’s central themes.

With the difficulty of accessing federal environmental assessment files in mind, I narrowed the focus of my study to provincial assessments of green hydrogen projects with marine impacts in Nova Scotia and Newfoundland and Labrador, resulting in a final dataset of seven projects and 1,483 individual references. References to Indigenous Knowledge were assessed separately and excluded from the transparency scoring, consistent with CARE and OCAP principles (Carroll et al., 2020; FNIGC, n.d.).

What Did the Results Show?

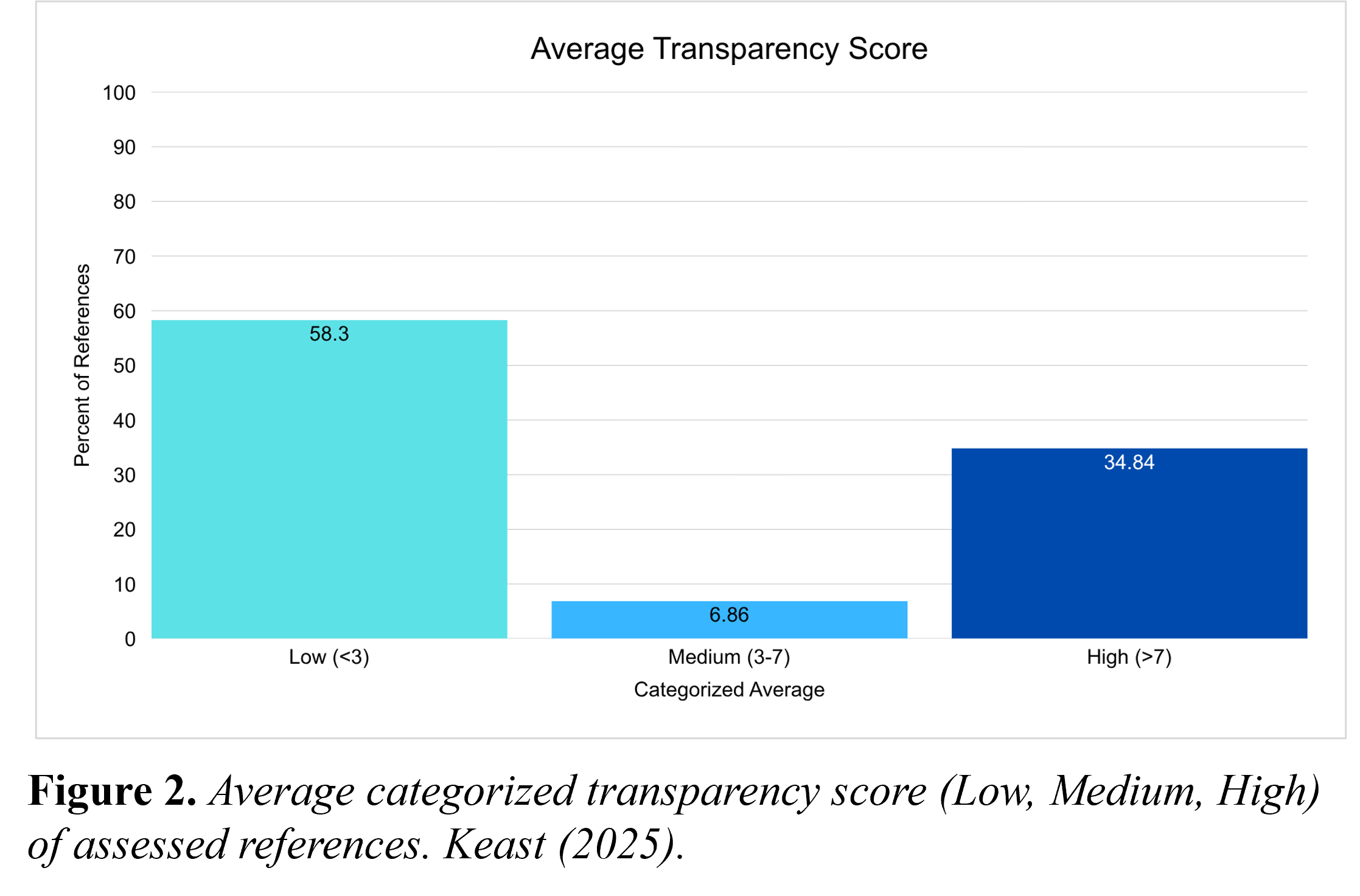

The short answer is that most of the references in the seven environmental assessments fell well short of the basic transparency standard. The mean Evidence Transparency Score across the 1,458 references was just 4.36 out of 10, and the median was 2.0 out of 10. More than half of all the references (58.3%) fell into the low-transparency range, while only 34.8% scored high. The difference between the mean and median tells its own story: a group of high-scoring references pulled the overall average transparency score up, but the typical reference in this dataset tended to be poorly documented.
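The gap between a mean and a median can be reproduced with a toy example. The scores below are synthetic and chosen only to show the effect; they are not the study's data.

```python
from statistics import mean, median

# Synthetic scores, NOT the study's data: six weakly documented
# references and four well documented ones.
scores = [2, 2, 2, 2, 2, 2, 8, 8, 8, 8]

# The four high scores lift the mean, while the median stays with
# the typical (low-scoring) reference.
print("mean:", mean(scores))
print("median:", median(scores))
```

Because more than half of the values sit at the low end, the median stays low while the cluster of high values raises the mean, which is the same pattern described above.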

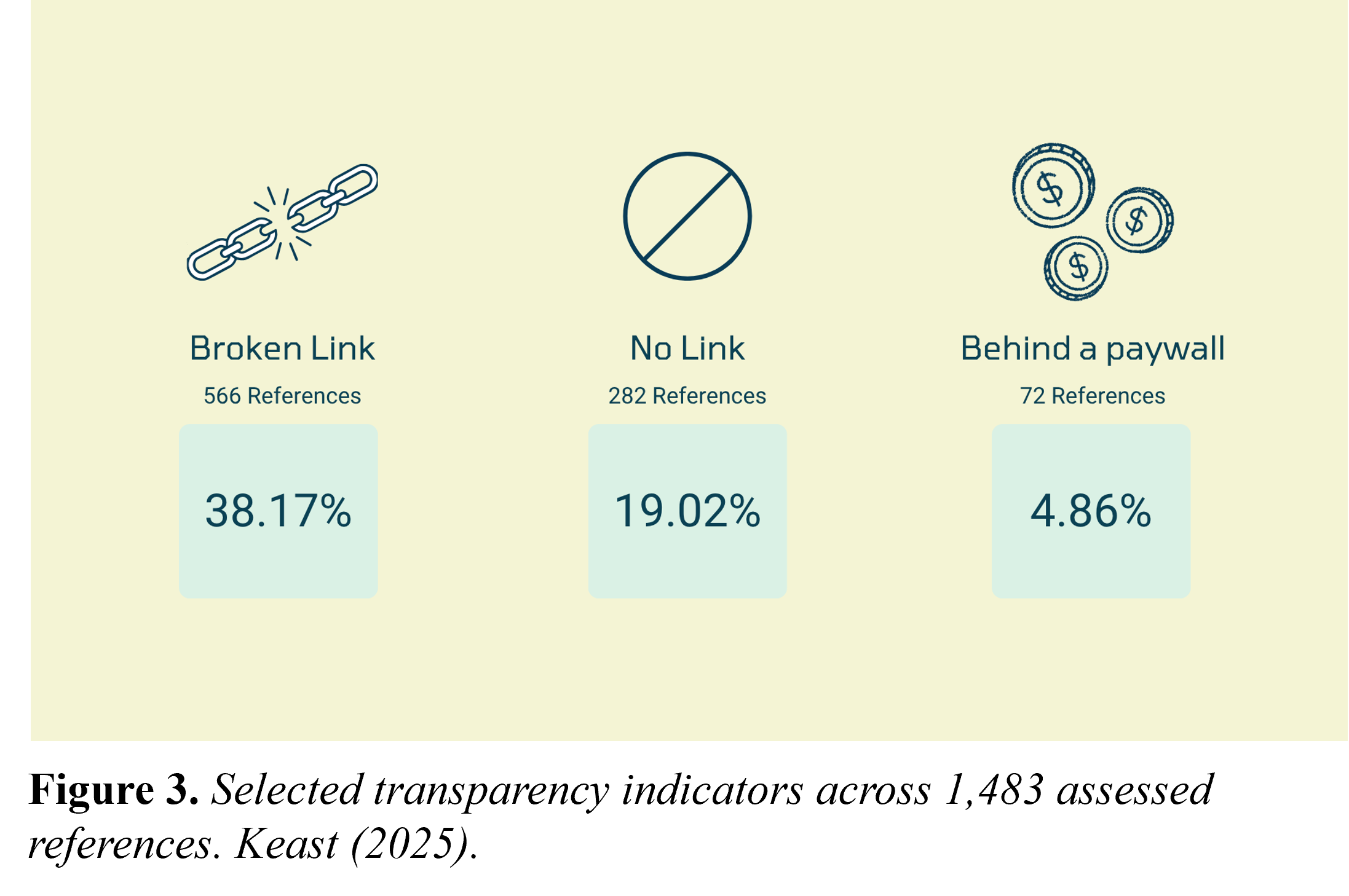

In addition, three access barriers were encountered repeatedly. Slightly more than 38% of the references contained broken or non-functional links, meaning that at the time of my study the originally cited sources were no longer reachable at the URLs included in the references. About 19% of the references included no links at all. Finally, a smaller but still substantial proportion of the cited publications were behind paywalls. Together, these barriers mean that readers attempting to verify the majority of the references in these files would face a sizable practical obstacle in doing so.

The picture becomes even clearer when specific quality indicators are noted. Only 28.2% of the references included persistent identifiers such as a digital object identifier (DOI). Just 6.1% were available as open-access sources, while 17.1% were government documents released under open licenses. Reuse permissions were unclear for 58% of the references, not because access was explicitly restricted, but because licensing information simply was not supplied. Provenance metadata (the contextual information needed to understand where evidence came from and how it was produced) was present for only 20% of the sources. This pattern of incomplete references is consistent with broader data stewardship research that emphasizes that without persistent identifiers, standardized metadata, and clearly defined reuse conditions, evidence cannot be reliably traced or revisited over time (Brock et al., 2021; Persaud et al., 2021; Wilkinson et al., 2016).
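A first-pass check for persistent identifiers and links can be automated. The Python sketch below is an illustration rather than the audit procedure used in the study: the function name and the regular expressions are assumptions, and the DOI pattern is a commonly used approximation, not a full validator. It reports only whether a locator is present in the reference text, not whether the link still resolves.

```python
import re

# Approximate patterns (assumptions for illustration, not formal validators).
DOI_PATTERN = re.compile(r"\b10\.\d{4,9}/[^\s\"<>]+", re.IGNORECASE)
URL_PATTERN = re.compile(r"https?://[^\s\"<>]+", re.IGNORECASE)


def locator_type(reference: str) -> str:
    """Classify a reference string as 'doi', 'url', or 'none'."""
    if DOI_PATTERN.search(reference):
        return "doi"
    if URL_PATTERN.search(reference):
        return "url"
    return "none"


# Shortened entries taken from this post's own reference list.
samples = [
    "Gilmour, T., & Stacey, J. (2024). FACETS, 9, 1-18. "
    "https://doi.org/10.1139/facets-2023-0118",
    "First Nations Information Governance Centre. (n.d.). "
    "https://fnigc.ca/ocap-training/",
    "Keast, E. (2025). Unpublished manuscript. Dalhousie University.",
]

for sample in samples:
    print(locator_type(sample), "|", sample[:40])
```

Checking whether a link is broken or paywalled would additionally require requesting each URL, which is left out here.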

In practice, weak documentation means that many references in the environmental assessment files were references in form but not in function. The sources were named, but verifying, reusing, or revisiting the publications over time is often impractical or impossible.

Of the 1,483 references, 25 referred to Indigenous Knowledge and, as noted, were assessed separately and not scored. Absent or unclear governance arrangements in almost every reference stood out. It was impossible to tell from the references whether restricted access reflected intentional data sovereignty protections or simply absent documentation – a distinction that I believe carries real ethical weight (Carroll et al., 2020; FNIGC, n.d.).

Why This Study Matters

The results of this study matter because environmental assessments are not simply administrative documents; they are the evidentiary basis for decisions about projects affecting coastlines, ecosystems, and communities. When the evidence included in the reports cannot be reliably located or verified, the quality of the decisions becomes difficult to defend and harder to scrutinize (Cucciniello et al., 2017; Dicks et al., 2014). This evidence issue matters now more than ever in light of ongoing federal and provincial efforts to streamline and accelerate environmental review processes for priority projects (Government of Canada, 2026). Shorter timelines reduce the time available for reviewers and the public to scrutinize cited sources carefully (Gilmour & Stacey, 2024). Under those conditions, the cost of poor documentation rises: evidence that cannot be quickly located and assessed is evidence that effectively drops out of public review. Ultimately, untraceable evidence doesn’t just frustrate reviewers; it also erodes the public’s ability to meaningfully participate in and make informed decisions about projects that directly affect their communities and environments.

It is also worth noting that the issues identified in this study are largely technical and procedural in nature, not scientific. This distinction is important, because weakly documented references are fixable. The findings do not imply that the underlying science supporting the assessments is flawed, or that proponents and practitioners were acting in bad faith. The results show that the methods used to document and share evidence were not designed with traceability or reuse in mind. With relatively modest changes to how references are formatted and reported, transparency of evidence could look very different.

Moving Forward

Based on the findings of this study, the following practical recommendations could be implemented without major regulatory changes:

- Make EA reports easier to find. Standardize how projects are publicly posted, upgrade search and browsing functionality of repositories, publish machine-readable metadata, and use persistent links and identifiers.

- Require a reference list in each Environmental Assessment/Impact Assessment submission. References must point to the location of every source used, ensuring full traceability.

- Standardize the format of references. Adopt a simple provincial standard with required fields, e.g., URLs or DOIs, and clear rules for citing datasets, maps, and web pages.

- Adopt standards-based evidence stewardship. Require FAIR-aligned evidence practices (Wilkinson et al., 2016), apply OCAP® and CARE principles (Carroll et al., 2020; FNIGC, n.d.), and adhere to open-by-default and open government standards.
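To make the idea of required fields and machine-readable metadata concrete, here is a minimal Python sketch of what a reference record could look like. No such provincial standard exists in the material reviewed here: the `Reference` class, its field names, and the rule that each record needs a DOI or a URL are hypothetical choices for illustration.

```python
import json
from dataclasses import asdict, dataclass
from typing import Optional


@dataclass
class Reference:
    """Hypothetical reference record; field names are illustrative."""

    authors: str
    year: str
    title: str
    doi: Optional[str] = None
    url: Optional[str] = None
    licence: Optional[str] = None

    def missing_fields(self) -> list[str]:
        """List the required fields this record fails to supply."""
        missing = [
            name
            for name in ("authors", "year", "title")
            if not getattr(self, name).strip()
        ]
        if not (self.doi or self.url):
            missing.append("doi_or_url")
        return missing

    def to_json(self) -> str:
        """Serialize the record as machine-readable JSON."""
        return json.dumps(asdict(self), ensure_ascii=False)


complete = Reference(
    authors="Gilmour, T., & Stacey, J.",
    year="2024",
    title="Access to environmental justice in Canadian "
    "environmental impact assessment",
    doi="10.1139/facets-2023-0118",
)
incomplete = Reference(authors="Keast, E.", year="2025", title="An audit")

print(complete.missing_fields())
print(incomplete.missing_fields())
print(complete.to_json())
```

A registry could run a check like `missing_fields()` at submission time, so that gaps are flagged while the proponent can still fix them.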

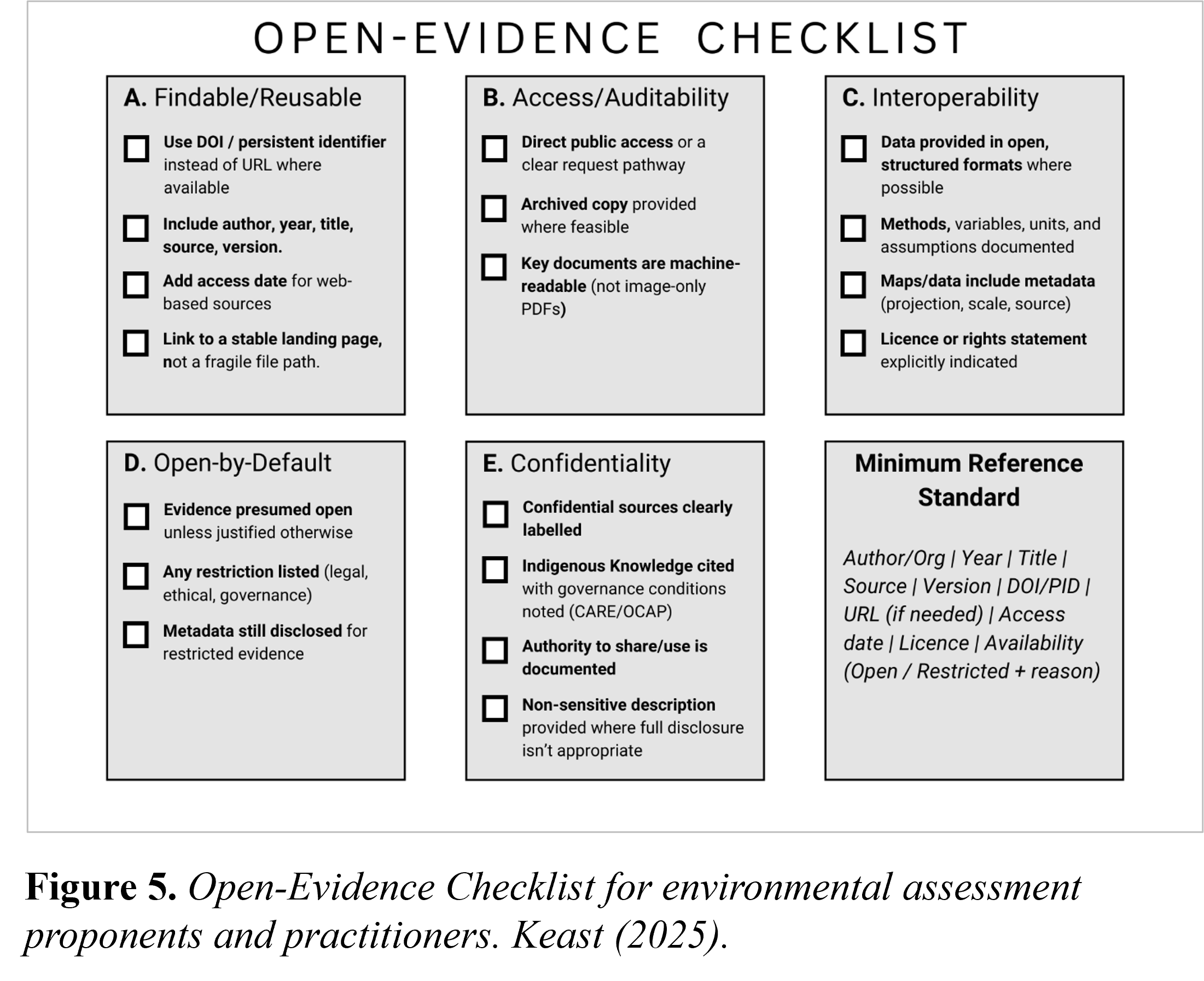

In conducting the study, I recognized that public servants and practitioners may face capacity constraints that contributed to inconsistent reference and evidence documentation. To help address this issue, I produced an open-evidence checklist as a practical, easy-to-use reference tool (see Figure 5). Organized around five key areas (findability and reuse, access and auditability, interoperability, open-by-default practices, and confidentiality), the checklist is designed to support proponents and practitioners in strengthening evidence transparency. Where evidence cannot or should not be made fully public, the checklist emphasizes clear labelling, governance documentation, and transparency about the reasons for restricted access.

The main outcome of this study is simple: while making assessment documents publicly available is important, on its own availability is an insufficient condition for transparent, evidence-based decision-making. If the evidence cited in assessment documents cannot be located, verified, or revisited, the promise of open and accountable environmental governance remains a promise lacking real commitment.

References

Brock, T. C. M., Elliott, K. C., Gladbach, A., Moermond, C., Romeis, J., Seiler, T.-B., Solomon, K., & Dohmen, G. P. (2021). Open science in regulatory environmental risk assessment. Integrated Environmental Assessment and Management, 17(6), 1229-1242. https://doi.org/10.1002/ieam.4433

Carroll, S. R., Garba, I., Figueroa-Rodríguez, O. L., Holbrook, J., Lovett, R., Materechera, S., Parsons, M., Raseroka, K., Rodriguez-Lonebear, D., Rowe, R., Sara, R., Walker, J. D., Anderson, J., & Hudson, M. (2020). The CARE principles for Indigenous data governance. Data Science Journal, 19(43), 1-12. https://doi.org/10.5334/dsj-2020-043

Cucciniello, M., Porumbescu, G. A., & Grimmelikhuijsen, S. (2017). 25 years of transparency research: Evidence and future directions. Public Administration Review, 77(1), 32-44. https://doi.org/10.1111/puar.12685

Dicks, L. V., Walsh, J. C., & Sutherland, W. J. (2014). Organising evidence for environmental management decisions: A “4S” hierarchy. Trends in Ecology & Evolution, 29(11), 607-613. https://doi.org/10.1016/j.tree.2014.09.004

First Nations Information Governance Centre. (n.d.). The First Nations principles of OCAP®. https://fnigc.ca/ocap-training/

Gilmour, T., & Stacey, J. (2024). Access to environmental justice in Canadian environmental impact assessment. FACETS, 9, 1-18. https://doi.org/10.1139/facets-2023-0118

Government of Canada. (2025, June 2). Impact Assessment Act (S.C. 2019, c. 28, s. 1). https://laws.justice.gc.ca/eng/acts/I-2.75/

Government of Canada. (2026). Major Projects Office. https://www.canada.ca/en/privy-council/major-projects-office.html

Keast, E. (2025). An audit of evidence transparency in Atlantic Canada green hydrogen projects with marine impacts [Unpublished manuscript]. Dalhousie University.

Office of the Chief Science Advisor of Canada. (2020). Roadmap for open science. https://science.gc.ca/site/science/sites/default/files/attachments/2022/Roadmap-for-Open-Science.pdf

Persaud, B. D., Dukacz, K. A., Saha, G. C., Peterson, A., Moradi, L., O’Hearn, S., Clary, E., Mai, J., Steeleworthy, M., Venkiteswaran, J. J., Kheyrollah Pour, H., Wolfe, B. B., Carey, S. K., Pomeroy, J. W., DeBeer, C. M., Waddington, J. M., Van Cappellen, P., & Lin, J. (2021). Ten best practices to strengthen stewardship and sharing of water science data in Canada. Hydrological Processes, 35(11), e14385. https://doi.org/10.1002/hyp.14385

Westwood, A. R., Mines, S. M., Miglani, S., Sharan, R., Legault, A., Fitzpatrick, P., Sergeant, C. J., & Collison, B. R. (2025). Unearthing trends in environmental impact assessments for mines and quarries across Canada. FACETS, 10, 1-22. https://doi.org/10.1139/facets-2025-0114

Wilkinson, M. D., Dumontier, M., Aalbersberg, I. J., Appleton, G., Axton, M., Baak, A., Blomberg, N., Boiten, J.-W., da Silva Santos, L. B., Bourne, P. E., Bouwman, J., Brookes, A. J., Clark, T., Crosas, M., Dillo, I., Dumon, O., Edmunds, S., Evelo, C. T., Finkers, R.,…Mons, B. (2016). The FAIR guiding principles for scientific data management and stewardship. Scientific Data, 3, 160018. https://doi.org/10.1038/sdata.2016.18

Author: Erin Keast

Erin Keast is a 2026 graduate of the Master of Resource and Environmental Management program offered by the Dalhousie University School for Resource and Environmental Studies. This blog entry is based on her capstone research, which examined evidence transparency and data stewardship in environmental assessments for green hydrogen projects with marine impacts in Atlantic Canada.

Images: All of the figures in this post were prepared by E. Keast.

Tags: Grey Literature; Information Use & Influence; Scientific Communication